Opened Captions

Opened Captions makes it possible to write code that knows what is being said, in real time, on broadcast television.

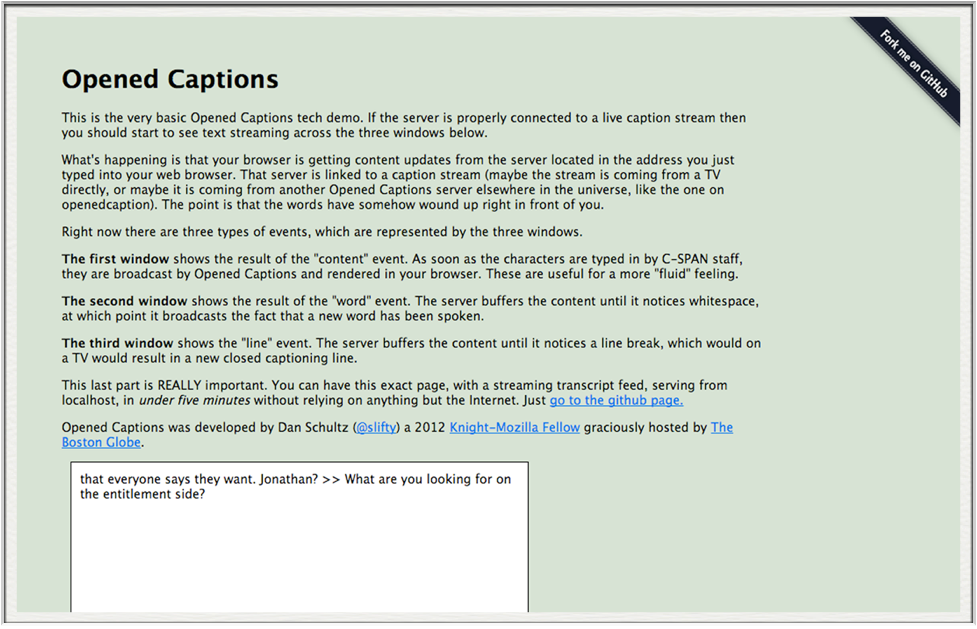

Long Description

You may have heard of closed captions before: they’re the little words that show up at the bottom of TVs in airports, in bars, or maybe at home when you mute the TV. Opened Captions scrapes those captions and regurgitates them into something called a web socket (web sockets take data and pass it along to anything willing to listen). The service can take in caption data directly over USB or from another Opened Captions server.
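To make "pass it along to anything willing to listen" concrete, here is a minimal TypeScript sketch of that fan-out pattern. It also shows one relay chained to another, the way one server can feed from another server. It is an in-process illustration only, not Opened Captions' code. `CaptionRelay`, `subscribe`, `publish`, and `followUpstream` are hypothetical names. In the real service, Socket.io connections would presumably sit between these hops instead of direct function calls.

```typescript
// Hypothetical sketch (not Opened Captions' actual code): an in-process
// relay that takes caption text and passes it along to every listener.
type CaptionListener = (text: string) => void;

class CaptionRelay {
  private listeners: CaptionListener[] = [];

  // Register a listener; the returned function unsubscribes it.
  subscribe(listener: CaptionListener): () => void {
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }

  // Hand one chunk of caption text to every current listener.
  // Iterate over a copy so a listener may unsubscribe while being called.
  publish(text: string): void {
    for (const listener of this.listeners.slice()) {
      listener(text);
    }
  }

  // Feed this relay from an upstream relay, mirroring how a server can
  // take captions from another server instead of from local hardware.
  followUpstream(upstream: CaptionRelay): () => void {
    return upstream.subscribe((text) => this.publish(text));
  }
}

// Usage: a downstream relay mirrors everything the primary one publishes.
const primary = new CaptionRelay(); // stands in for the hardware-fed server
const mirror = new CaptionRelay(); // stands in for a chained server
mirror.followUpstream(primary);

const received: string[] = [];
mirror.subscribe((text) => received.push(text));
primary.publish("GOOD EVENING");
console.log(received); // [ 'GOOD EVENING' ]
```

The listeners never need to know whether the text came from capture hardware or from another relay. That is what makes chaining servers cheap in this pattern.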

This project came from my Knight-Mozilla fellowship at the Boston Globe during the 2012 elections. We were brainstorming innovative ways to cover the presidential debates and quickly realized that the best ideas required a way to know what was being said in real time. I got to work looking for solutions and, finding none, decided to make my own. A few days later, Opened Captions was born.

Although we didn’t end up building any official Boston Globe coverage, I did manage to throw together a wonderful project called DRUNK-SPAN. The application streamed the debates and told the world to drink whenever a key phrase was spoken. Most importantly, the transcript took shots along with the players. You can view the transcript here.
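A drinking game like this comes down to watching the caption stream for key phrases. One wrinkle is that captions arrive in small chunks, so a phrase can straddle two of them. The TypeScript sketch below is one way to handle that, assuming case-insensitive exact matching. `PhraseSpotter` and `feed` are hypothetical names, and this is not how the original application was written.

```typescript
// Hypothetical sketch: count key phrases in a stream of caption chunks.
// A short tail of earlier text is kept so a phrase split across two
// chunks is still found, and each occurrence is reported only once,
// in the chunk where its last character arrives.
class PhraseSpotter {
  private tail = "";
  private readonly phrases: string[];
  private readonly keep: number;

  constructor(phrases: string[]) {
    // Compare case-insensitively by upper-casing everything.
    this.phrases = phrases.map((p) => p.toUpperCase());
    const longest = this.phrases.reduce((max, p) => Math.max(max, p.length), 0);
    // Keep just enough old text to complete the longest phrase.
    this.keep = Math.max(0, longest - 1);
  }

  // Feed one caption chunk; returns the phrases that end inside it.
  feed(chunk: string): string[] {
    const text = this.tail + chunk.toUpperCase();
    const hits: string[] = [];
    for (const phrase of this.phrases) {
      if (phrase.length === 0) continue;
      let at = text.indexOf(phrase);
      while (at !== -1) {
        // Only count matches whose last character is in the new chunk;
        // matches ending inside the tail were reported on an earlier call.
        if (at + phrase.length > this.tail.length) hits.push(phrase);
        at = text.indexOf(phrase, at + 1);
      }
    }
    // slice(-0) would return the whole string, so guard the zero case.
    this.tail = this.keep > 0 ? text.slice(-this.keep) : "";
    return hits;
  }
}

// Usage: the phrase is split across two chunks but still detected once.
const spotter = new PhraseSpotter(["big bird"]);
console.log(spotter.feed("I LIKE BIG")); // []
console.log(spotter.feed(" BIRD TOO")); // [ 'BIG BIRD' ]
```

Memory stays bounded however long the broadcast runs, because only the last (longest phrase length minus one) characters are kept between chunks.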

Papers, Posts, and Press

- Introducing Opened Captions (slifty.com)

- Opened Captions: Turning the spoken words on TV screens into streams of hackable data (Nieman Lab)

- Introducing Opened Captions: A SocketIO API for live TV closed captions (Source)

- Opened Captions (Slideshare)

Technologies

- Node.js

- Socket.io

Testimonials

h*hic*Tey brougttth*hic* su binder*hic*s ffflul fo wmoen.

– Mitt Romney